
We all know that A/B testing is one of the best ways to improve your conversion rate. By applying the scientific method – exposing your variation to a subset of your website visitors while maintaining a control group – you can determine what the impact of your change is likely to be.

At FIRST we’ve run hundreds of A/B tests and improved conversion rates for dozens of clients. We also subscribe to many of the leading conversion publications to keep track of the latest research and case studies of winning tests. However, we often find that the tests in these case studies are declared ‘winners’ even when they might not be.

To illustrate this, here’s an example from a test we recently ran for one of our clients…

This test ran on one of New Zealand’s highest-traffic websites and consisted of the original web page plus three variations. After one week the test had been shown to thousands of visitors and each variation had resulted in hundreds of conversions. The variation shown in the red line was winning by 6.9% and our A/B testing tool gave this result a statistical confidence of 99%. Clear winner, right?

Wrong. We continued running this test for a few more weeks and look at what happened to the results…

The ‘winning’ variation, represented by the red line, continued to converge with the other variations until there was almost no difference between them. After six weeks the variation we initially thought was the winner was only 0.9% up on the original at 76% confidence.
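For readers who want to sanity-check a confidence figure themselves, here is a minimal sketch of one common approach: a two-sided, two-proportion z-test. This is an assumption on our part, not a description of how any particular A/B testing tool works (many use one-sided, Bayesian or sequential methods instead), and the function name and the visitor counts in the usage lines are hypothetical, not figures from the test above.

```python
from math import sqrt
from statistics import NormalDist


def confidence_of_difference(control_visitors, control_conversions,
                             variant_visitors, variant_conversions):
    """Return (relative_lift, confidence) for a variation versus the control.

    Uses a classical two-sided, two-proportion z-test with a pooled
    standard error. Confidence is reported as 1 minus the p-value.
    """
    p_control = control_conversions / control_visitors
    p_variant = variant_conversions / variant_visitors

    # Pooled conversion rate under the assumption of no real difference.
    pooled = (control_conversions + variant_conversions) / (
        control_visitors + variant_visitors
    )
    standard_error = sqrt(
        pooled * (1 - pooled) * (1 / control_visitors + 1 / variant_visitors)
    )

    z = (p_variant - p_control) / standard_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    relative_lift = (p_variant - p_control) / p_control
    return relative_lift, 1 - p_value


# Hypothetical counts, for illustration only.
lift, confidence = confidence_of_difference(10_000, 500, 10_000, 600)
print(f"lift: {lift:.1%}, confidence: {confidence:.1%}")
```

Running a check like this every day and stopping the first time it crosses 95% or 99% is exactly how an early ‘winner’ can be declared before the results have settled. The calculation assumes the sample size was fixed in advance.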

The implications of this are important to realise. Significant agency and client resources can be invested in uncovering customer insights, designing new variations, running tests and hard coding winning variations, yet if results are not interpreted properly then it all falls over.

Part of the problem lies in the time it can take to realise a true, statistically valid outcome. We’re all impatient to see results, particularly for a test we’re excited about. However, most tests require more time than they are given to reach their conclusion. This is particularly the case in a country like New Zealand where a smaller population means that many sites have limited traffic.

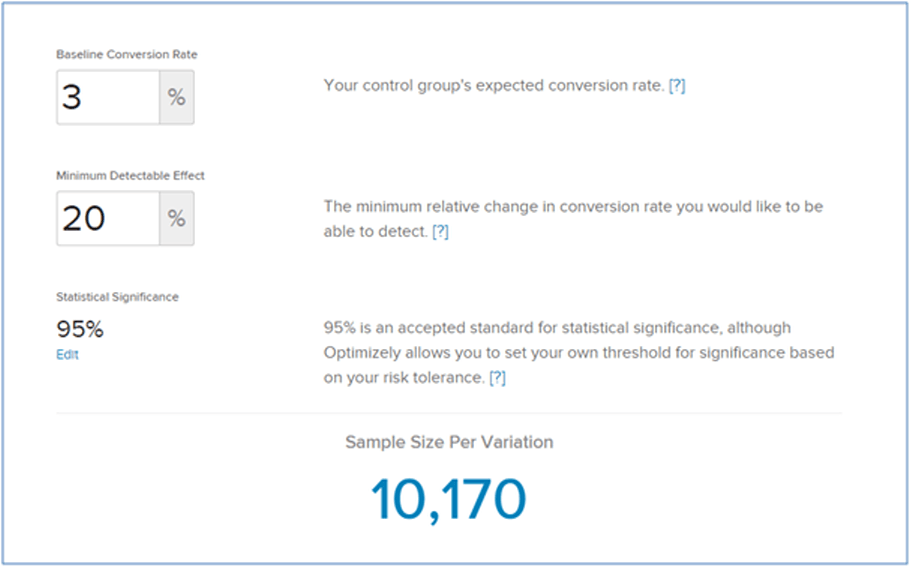

Use a sample size calculator

To see how long your test needs to run, use a tool like Optimizely’s sample size calculator. Then use your web analytics system to determine how many visitors are likely to be exposed to your test and how many days it will take to reach your required sample size.
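If you would like to see roughly what such a calculator does, the sketch below uses the classical fixed-sample formula for comparing two conversion rates. It is an approximation under stated assumptions (two-sided test, 80% power by default, equal traffic split) and is not the method used by Optimizely’s calculator, so expect the numbers to differ. The function names and example inputs are hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variation(baseline_rate, relative_lift,
                              confidence=0.95, power=0.80):
    """Approximate visitors needed in EACH variation to detect the lift.

    baseline_rate: current conversion rate, e.g. 0.05 for 5%.
    relative_lift: smallest relative improvement worth detecting,
                   e.g. 0.10 for a 10% uplift.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # two-sided
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2

    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


def days_to_run(visitors_per_day, variations, n_per_variation):
    """Days needed when daily test traffic is split evenly across all
    variations (count the original page as one of them)."""
    return ceil(n_per_variation * variations / visitors_per_day)


# Hypothetical inputs: 5% baseline, 10% relative lift, original plus
# three variations, 2,000 visitors per day entering the test.
n = sample_size_per_variation(0.05, 0.10)
print(n, "visitors per variation,", days_to_run(2_000, 4, n), "days")
```

Notice how strongly the result depends on the size of the lift you want to detect: smaller expected differences need far more visitors, which is why the tips that follow focus on making the effect bigger or easier to measure.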

If the required sample size is too large, don’t worry. There are ways to design your tests that will help you to get results much, much faster:

1. Test big changes

Instead of tweaking one thing on your page, create a brand new page that incorporates many changes at once. Big, bold changes will generate results much faster and can improve your conversion rates in leaps instead of increments.

2. Test on pages closer to your conversion end point

In many cases the home page is simply too far away from your end conversion point for your variation to make a big enough difference. Instead, test pages in your purchase funnel such as your shopping cart or payment page.

3. Test things that are more likely to influence conversion

Try to find those elements of your website that really drive conversion. For example, visitors are probably more concerned about ‘free delivery’ than the colour of your button, so find and test these elements instead.

4. Track micro-conversions

Instead of basing an experiment on how the variation ultimately influences purchases, why not base it on how well it moves the visitor one step further down the funnel? You are more likely to see the influence of your variation on goals that are closer to where the test takes place.

5. Track groups of goals together

If you have a contact page with a contact form, email addresses and social media buttons, don’t track these individually. Instead create a single goal that is triggered by clicks on any of these elements. That way, you’ll consolidate the impact of the test on one single goal instead of diluting it among many.

6. Filter out visitors not exposed to your variation

Testing a pop-up window? Then make sure visitors are only opted into the test when they click the button that opens the window – not before. This will stop you diluting your results with visitors who aren’t actually influenced by your variation. Use precise URL targeting or the specific techniques provided by your A/B testing platform to activate experiments exactly when you need them.

7. Accept more risk

A confidence level of 95% is considered the industry benchmark. However, decreasing the confidence level reduces the sample size you need and therefore the time it takes to run a test, at the cost of a higher chance of declaring a winner that isn’t one. Consider whether this increased risk is compensated by the increased speed at which you can run and call tests.
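To get a feel for the trade-off, the sketch below estimates how the required sample size changes relative to the 95% benchmark when you lower the confidence level. It relies on the standard approximation that sample size scales with the square of the sum of the two z-values, and it assumes a two-sided test with 80% power. The function name is hypothetical and the figures are indicative only.

```python
from statistics import NormalDist


def relative_sample_size(confidence, power=0.80, reference_confidence=0.95):
    """Required sample size as a fraction of what the reference
    confidence level would need, all else being equal."""
    z = NormalDist().inv_cdf
    z_beta = z(power)
    needed = (z(1 - (1 - confidence) / 2) + z_beta) ** 2
    reference = (z(1 - (1 - reference_confidence) / 2) + z_beta) ** 2
    return needed / reference


for level in (0.95, 0.90, 0.80):
    share = relative_sample_size(level)
    print(f"{level:.0%} confidence: {share:.0%} of the visitors needed at 95%")
```

The saving is real but not dramatic, so on its own a lower confidence level is unlikely to rescue a test that needs many times more traffic than you have.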

FIRST are Conversion Experts

Regardless of the amount of traffic on your site, a smart conversion optimisation consultant will be able to design tests that maximise impact in minimal time. If you’re interested in taking your A/B testing programme to the next level, contact the team at FIRST today for a free, no-obligation quote.